Automating Log Analysis With LogParser, Log Parser Lizard And SendEmail

Viewing and gleaning information from log files has to be one of the most annoying and difficult tasks any admin (or anyone else) needs to perform. I’ll look at the two most common types of logs that Windows users have to dig into, IIS logs and Event Viewer logs: how to get the data you need out of them, how to analyze it with freely available tools, and, once you have the data, how to send it out by email. You may choose to use commercial utilities such as Sawmill, but you might be surprised at how much can be done for free!

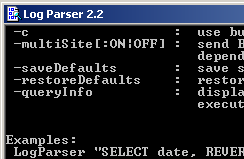

The key to all this is a free utility, Microsoft Log Parser. If you haven’t seen or heard of this tool, do yourself a favor: download it, try it out, and learn how to use it. There is plenty of information on the Internet about using the tool and running some basic queries. Log Parser can be difficult to learn at first, but once you really get to know it, you’ll swear by it. Most Windows admins have used it or know of it, but may not have had time to learn the specifics. One side note: it helps to add the folder that contains logparser.exe (in most cases c:\PROGRA~1\LOGPAR~1.2) to your system PATH. This really shortens your command lines as you work with Log Parser. Also make sure the path entry does NOT have a space at the end, or the command shell won’t find the executable.
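The PATH advice above can be checked from a script. The following is a minimal Python sketch, not part of Log Parser itself: the helper names are hypothetical, and it assumes the executable is named logparser.exe. It reports PATH entries with stray whitespace and whether the shell would be able to find the executable.

```python
import os
import shutil


def find_padded_path_entries(path_value):
    """Return PATH entries that carry leading or trailing whitespace."""
    entries = path_value.split(os.pathsep)
    return [entry for entry in entries if entry and entry != entry.strip()]


def locate_logparser(exe_name="logparser.exe"):
    """Return the full path to the executable if it is on PATH, else None."""
    return shutil.which(exe_name)


if __name__ == "__main__":
    # Flag entries such as "c:\PROGRA~1\LOGPAR~1.2 " (note the trailing space).
    for entry in find_padded_path_entries(os.environ.get("PATH", "")):
        print(f"PATH entry has stray whitespace: {entry!r}")
    print("logparser.exe found at:", locate_logparser())
```

If the last line prints None, the folder is either missing from PATH or was entered with a typo.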

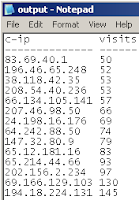

logparser "select c-ip, count(*) as visits into output.txt from *.log group by c-ip having count(*) >= 50 order by visits" -i:iisw3c -rtp:-1

This places the IP address and hit count of every client with at least 50 requests into a file named output.txt. The query uses AS to name the count column visits, then sorts on that name. You’ll get something like the image at left (using data that was provided here)

You can go further and keep the SQL in a separate file, then have LogParser read it with a command such as this:

logparser file:cmd.sql -i:iisw3c -rtp:-1
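As an illustration of the file-based form, here is a small Python sketch that writes the earlier IP-count query to cmd.sql and builds the matching command line. The function names are hypothetical, and the sketch assumes the query file sits in the folder you run Log Parser from.

```python
from pathlib import Path

# The same query as the one-line example, spread over several lines.
QUERY = """\
SELECT c-ip, COUNT(*) AS visits
INTO output.txt
FROM *.log
GROUP BY c-ip
HAVING COUNT(*) >= 50
ORDER BY visits
"""


def write_query_file(directory, name="cmd.sql"):
    """Write the query into <directory>/<name> and return the file path."""
    path = Path(directory) / name
    path.write_text(QUERY, encoding="ascii")
    return path


def build_command(sql_name="cmd.sql"):
    """Return the Log Parser argument list that reads the query from a file."""
    return ["logparser", f"file:{sql_name}", "-i:iisw3c", "-rtp:-1"]


if __name__ == "__main__":
    write_query_file(".")
    print(" ".join(build_command()))
```

Keeping the SQL in its own file means you can edit and reformat the query without worrying about command-line quoting.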

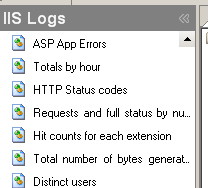

An interesting free utility, the Log Parser Lizard GUI, simplifies the process of using Log Parser. The great thing is that you can get a large amount of information in a short amount of time. After you’ve installed Log Parser, run this free program and get all sorts of quick information and graphs. The built-in queries are split into IIS logs and various other local sources. What’s amazing, though, is that you can manage the queries and see the SQL statement behind each one. One such query lists the client-requested URLs on your server that returned an error (status 400 or higher), along with how often each one occurred. It looks like this:

SELECT STRCAT( cs-uri-stem, REPLACE_IF_NOT_NULL(cs-uri-query,

STRCAT('?',cs-uri-query))) AS Request,

STRCAT( TO_STRING(sc-status),

STRCAT( '.',COALESCE(TO_STRING(sc-substatus), '?' ))) AS Status,

COUNT(*) AS Total FROM #IISW3C#

WHERE (sc-status >= 400)

GROUP BY Request, Status

ORDER BY Total DESC

Let’s say I want to collect all of the system event errors from the last 7 days and place them in a file that I can email to myself. You should create a folder for all this; I’ll call it c:\data in my example.

I create my output with Log Parser using this command:

LogParser -i:EVT "SELECT * into c:\data\output.txt FROM System WHERE EventType=1 AND TimeWritten >= TO_LOCALTIME(SUB( SYSTEM_TIMESTAMP(), TIMESTAMP('6', 'd') ) )"

To really make this useful, you will want to send output.txt by email. You can do this with a small free utility named sendEmail. In this example, I’m going to send this email out once a week. If you manage servers, this is a great free way to keep track of errors that occur. If you manage multiple servers, try naming the output file after your server.
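The server-name suggestion can be sketched in Python as follows. This is an illustration under assumptions: the function names are hypothetical, the host name is taken from socket.gethostname(), and the query text is copied unchanged from the command above.

```python
import socket
from pathlib import PureWindowsPath


def build_event_query(output_file):
    """Return the event-log query, writing its results to output_file."""
    return (
        f"SELECT * INTO {output_file} FROM System "
        "WHERE EventType=1 AND TimeWritten >= "
        "TO_LOCALTIME(SUB(SYSTEM_TIMESTAMP(), TIMESTAMP('6', 'd')))"
    )


def build_command(data_dir=r"c:\data", server_name=None):
    """Return the Log Parser argument list, naming the output after the server."""
    name = server_name or socket.gethostname()
    output_file = PureWindowsPath(data_dir) / f"{name}.txt"
    return ["LogParser", "-i:EVT", build_event_query(output_file)]


if __name__ == "__main__":
    # Prints the arguments; pass the list to subprocess.run() to execute it.
    print(build_command())
```

With one file per server, reports from several machines can land in the same mailbox without overwriting or being confused with one another.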

Here is an example of how to send the file C:\DATA\OUTPUT.TXT by email to multiple addresses. Separate the addresses with commas:

sendEmail.exe -f fromuser@domain.com -t "touser1@domain.com, touser2@domain.com" -u "Log Report for Computer: XXXXX" -m "Please see attached file." -a c:\data\output.txt -s smtp.server.com -v

Much of the above example is self-explanatory; however, be sure to know and test your SMTP server beforehand. I have not tested the advanced SMTP authentication features, but sendEmail does let you pass that information. For help on the sendEmail command, type sendemail /? at a command prompt that can find the application.
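If you would rather assemble the sendEmail call from a script, the sketch below builds the same argument list in Python. The function name and parameter names are hypothetical; the flags are the ones used in the example above, and the addresses and SMTP host are placeholders.

```python
def build_sendemail_args(sender, recipients, subject, message,
                         attachment, smtp_server):
    """Return the sendEmail argument list for one report email."""
    return [
        "sendEmail.exe",
        "-f", sender,
        "-t", ", ".join(recipients),  # comma-separated, as in the example
        "-u", subject,
        "-m", message,
        "-a", attachment,
        "-s", smtp_server,
        "-v",
    ]


if __name__ == "__main__":
    args = build_sendemail_args(
        sender="fromuser@domain.com",
        recipients=["touser1@domain.com", "touser2@domain.com"],
        subject="Log Report for Computer: XXXXX",
        message="Please see attached file.",
        attachment=r"c:\data\output.txt",
        smtp_server="smtp.server.com",
    )
    # Prints the arguments; pass the list to subprocess.run() to send.
    print(args)
```

Passing the arguments as a list lets Python handle the quoting of values that contain spaces, such as the subject line.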

That’s the process. With the tools mentioned above, you can gather log information from places such as IIS and the Event Viewer, then take that output and send it to a configured email address. You can even automate the process using simple batch files and the Windows Task Scheduler. Being able to send this information off the machine can save you quite a bit of time when you need to understand a problem on a computer but don’t have immediate physical or remote access. As always, your thoughts, suggestions and interesting anecdotes are welcome.
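For the batch file and Task Scheduler step, here is one possible Python sketch. It only builds text and arguments: the task name, the script path c:\data\report.bat, and the Monday 06:00 schedule are all assumptions for illustration, and the commands you put in the batch file are the Log Parser and sendEmail lines shown earlier.

```python
def build_batch_script(commands):
    """Return the text of a batch file that runs the given commands in order."""
    lines = ["@echo off"] + list(commands)
    return "\r\n".join(lines) + "\r\n"


def build_schtasks_args(task_name, script_path, day="MON", start_time="06:00"):
    """Return schtasks arguments that register a weekly run of script_path."""
    return [
        "schtasks", "/Create",
        "/TN", task_name,      # name shown in Task Scheduler
        "/TR", script_path,    # program the task runs
        "/SC", "WEEKLY",
        "/D", day,
        "/ST", start_time,
    ]


if __name__ == "__main__":
    script = build_batch_script([
        "LogParser file:c:\\data\\events.sql -i:EVT",  # hypothetical query file
        "sendEmail.exe -f fromuser@domain.com -t touser1@domain.com "
        "-u \"Log Report\" -m \"Please see attached file.\" "
        "-a c:\\data\\output.txt -s smtp.server.com",
    ])
    print(script)
    print(build_schtasks_args("Weekly Log Report", r"c:\data\report.bat"))
```

Save the batch text to the script path, run the schtasks arguments once from an elevated prompt, and the report should then arrive on the chosen schedule.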