The Strange Case of Dell VRTX Hard Disk Compatibility [Solved]

In early June of this year, I ordered five more drives for a client’s Dell PowerEdge VRTX server enclosure, to add to the seven installed 2TB drives that were humming along just fine. My only request to Dell was that these drives be the biggest we could get and that they be compatible with the running VRTX enclosure. Simple, right? So we ordered the $8,000 in hard drives and waited. The drives would kick off a strange saga of failure and support, the likes of which I hadn’t seen since working on another blade system, one made by IBM.

Update: This has been solved. Support does exist for these drives, and I was able to get them working. Read on to find out more or just go to the solution.

On arrival, I confirmed the drives and enclosure were hot-swappable, took a drive out of its packaging and installed it in the VRTX enclosure. Nothing. No power, no spin up, nothing. The drive was completely powerless. I tried each of the other four drives and got the same result. No spinning up.

Just as a matter of reference, I had all the firmware that I could see updated, and this is what I was working with post-update:

The CMC Firmware: 3.00.200.201708033700

The Chassis Infrastructure Firmware: 2.21.A00.201510302495

Every Server’s iDRAC firmware: 2.52.52.52

Storage Controller: Shared PERC8 (Integrated 9)

Storage Controller Firmware: 23.14.06.0013

When sending detail to Dell, I broke down everything I did:

1. Inserted one new drive hot; it did not power on or spin up.

2. Updated all firmware on the VRTX that seemed available using the Dell Repository tool.

3. Tested new drive in our (newer) server model number: PowerEdge 740xd – the drive was recognized on a hot insert and I was able to start the RAID creation process with this drive.

4. Inserted all 5 new drives into every slot; none powered on or were recognized.

5. Looked for settings that might control power to drive slots on the backplane; these don’t seem to exist.

You’ll notice I mentioned the PowerEdge 740xd; that comes with a PERC H730p controller, which can easily be confirmed to support a bandwidth of 12 Gbps.

After all of this, I was stumped. Dell continued to insist the drives were compatible, yet everything I was seeing said no. So we sent these drives back and received a set of 5 new 8 TB drives. Again, these drives would not power on. This was getting out of hand. Back to Dell, and they decided they’d send a technician out with two 8 TB drives different from the ones we’d previously attempted. Again, nothing.

As a matter of complying with the support request, I also did a CMC Reset. It was clear this wouldn’t blow away any of the current RAID configurations, so no problem. I did that and saw no change. Thus far, four different 8 TB drives were not powering on in the VRTX, even though Dell appeared to insist they were compatible.

So, what’s going on here?

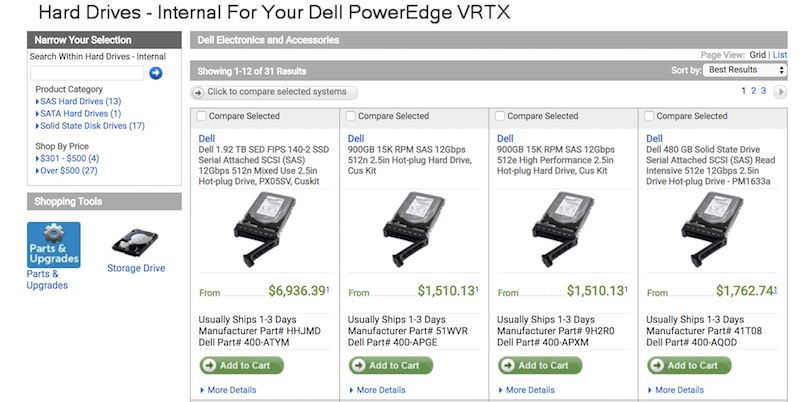

What seems most obvious is compatibility. Looking at the VRTX RAID controller, it’s a PERC8. Based on information widely available, it appears the PERC8 runs on “bandwidth [of] (3Gbps or 6 Gbps).” The drives Dell sent were all using the 12 Gbps standard. It would seem these two were not compatible, but no one at Dell could confirm this. Even more curious, a search on the Dell accessories site for the PowerEdge VRTX yields all kinds of 12 Gbps drives as options. In fact, you’ll have to look for 6 Gbps on that list. Could this be all that Dell did internally while assessing compatibility?

It does seem possible that a 12 Gbps drive would work as the first (0) drive attached to the RAID controller, but I wasn’t going to be able to test that without potentially destroying the currently configured RAID. I continued looking for information that might confirm or refute my hypothesis about compatibility.

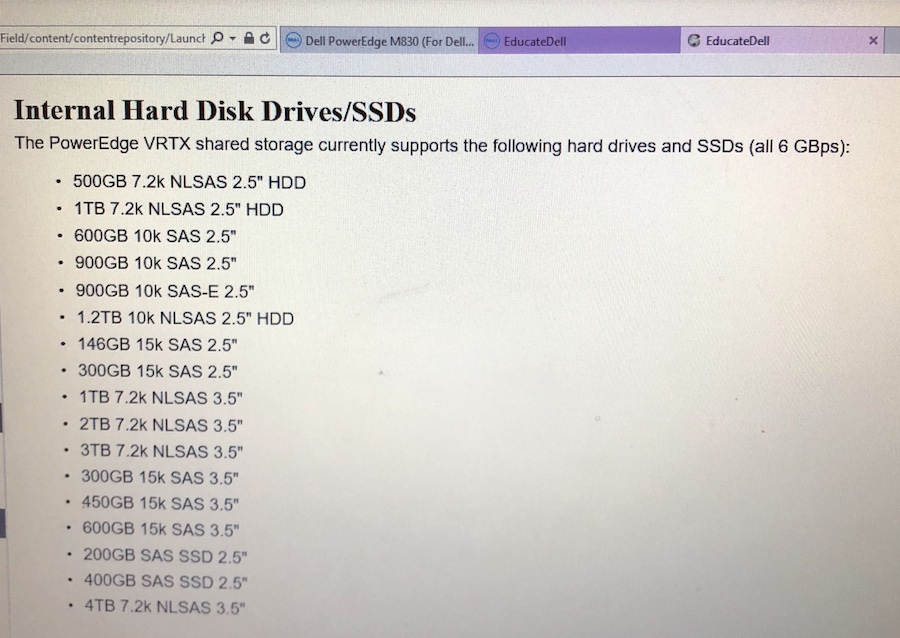

Then, I found an interesting piece of information in a field manual (of all places). This information comes as part of the “EducateDell” service manual. I sure wish I had this kind of information available on the public Dell site. It lists the drives compatible with the VRTX enclosure and general drive sizes.

You’ll see no reference to bandwidth here, but what really sticks out is the lack of an 8 TB drive option. Is that because this information is old, or because there was no 6 Gbps 8TB drive made for Dell, and thus that size was never compatible? It sure looks like it. Is there a spec-upgraded model of VRTX out there that includes a PERC9, and thereby supports the 12 Gbps standard? I don’t know. Could there be a specific middle firmware that Dell made to step down to 6 Gbps whenever it encountered 12 Gbps drives? If so, none of this has been confirmed by Dell, who seem steadfast on compatibility.
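To make the "step down" question concrete, here is a minimal sketch of how a link speed could be picked when controller and drive support different rates. It assumes the general SAS behavior of settling on the highest rate both ends support. The rate sets are illustrative: the PERC8 values come from the "3Gbps or 6 Gbps" quote above, while the drive's lower rates are an assumption, and nothing here models what the VRTX firmware actually does.

```python
from typing import Iterable, Optional


def negotiated_rate(controller_rates: Iterable[float],
                    drive_rates: Iterable[float]) -> Optional[float]:
    """Return the highest link rate (in Gbps) that both ends support, or None."""
    common = set(controller_rates) & set(drive_rates)
    return max(common) if common else None


# Rates quoted for the PERC8 earlier in this post.
perc8_rates = {3.0, 6.0}

# Assumption: a 12 Gbps drive that can also run at the older rates.
drive_with_fallback = {3.0, 6.0, 12.0}

# Hypothetical drive that only speaks 12 Gbps, for contrast.
drive_12_only = {12.0}

print(negotiated_rate(perc8_rates, drive_with_fallback))
print(negotiated_rate(perc8_rates, drive_12_only))
```

If the drives could fall back like the first example, a 6 Gbps controller alone would not explain a drive that never powers on, which is part of why the bandwidth theory felt incomplete.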

It seems, at least in the summer of 2018, that the VRTX product line is headed for pasture. The Dell page for the server provides no options for configuration, and the product itself hasn’t seen an update since 2013. Another modular option, the PowerEdge FX, looks set to supersede the VRTX as it seems more cross-functional. Some of the most important details to come from Dell were about how they tested with 8TB drives. There was a screenshot showing a VRTX holding 2 TB drives in front of an 8TB drive. So, this was clearly possible.

Continued…

Yes, Dell continued to work on this, sending various 8 TB drives to test, though none of them actually worked out. I was starting to think it would be smart to just request drives that supported the 6 Gbps standard and move on. To Dell’s credit, they stuck with me, even though they hadn’t fixed the problem.

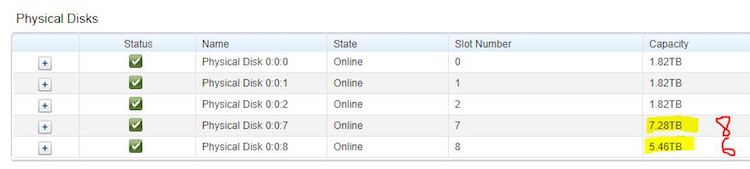

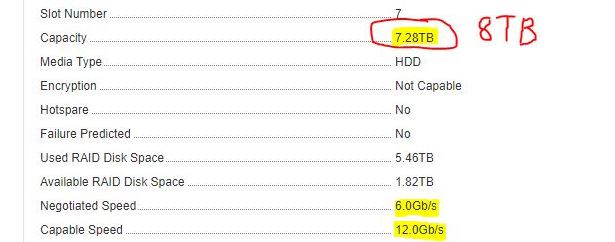

Nice touch pointing out the drive sizes with red highlighter too. Thanks, Dell guy. Another important detail was how the enclosure and controller handled the drives. As I had suspected, there is some kind of negotiation happening there (and clearly my server wasn’t doing it). Here’s what that looks like in the CMC:

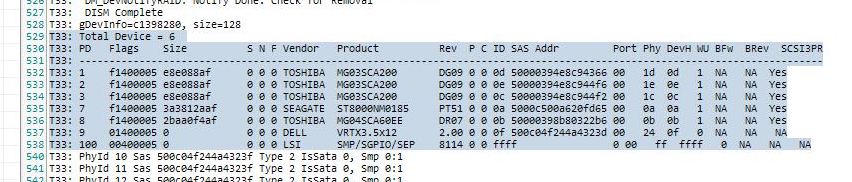

Then, the TTY log was included in a screenshot. I wasn’t sure what Windows application he was using to get this, but this log is where I eventually found my solution:

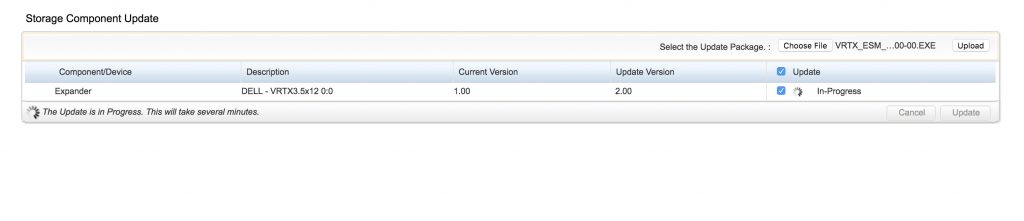

If you look closer, you’ll see the line showing “DELL VRTX3.5X12” and the Rev listed as 2.0. When I saw that, I immediately suspected this was something, possibly a newer hardware revision in that VRTX that we’d need to physically replace. Going back to my server, I noticed that the Backplane Expander Firmware was actually version 1.0. At the very least, I needed to update this, so I did. Take note that when you update this component, you upload an EXE file. Don’t download the EXE thinking you need to extract something; just upload the EXE.

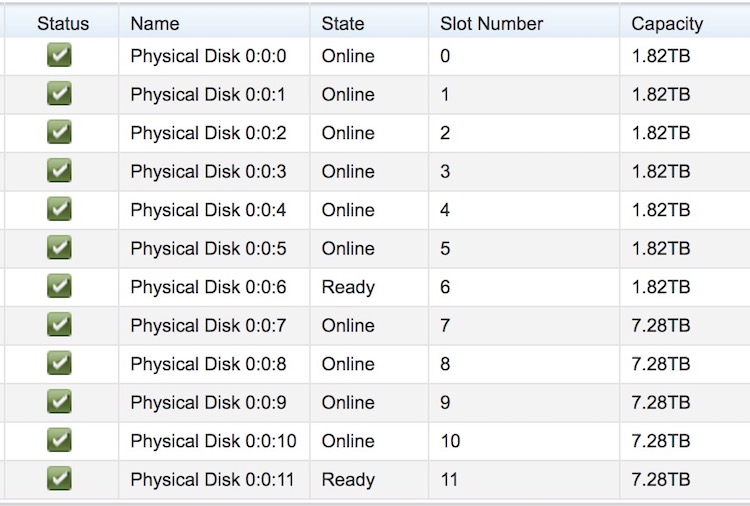

I did, and managed to get the Backplane Expander updated to 2.0. Then, without even restarting the server, CMC, or any other component, I added an 8TB drive and it worked. Here’s a look at my full enclosure with 2TB and 8TB drives mixed together:

So, the solution to 8TB+ (12 Gbps) drive support is to update the Backplane Expander Firmware to at least the 2.0 level. You can get the 2.0 update here and the update’s filename (for Windows) is VRTX_ESM_Firmware_DMR89_WN32_2.00_A00-00.EXE. Keep in mind this will likely be replaced by a newer update in time.
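If you track firmware levels for several enclosures, the "at least 2.0" rule is easy to check in a script. The sketch below is only an illustration: the function names are hypothetical, and it assumes you have already read the Backplane Expander version string from the CMC yourself (it does not query any Dell tool).

```python
from typing import Tuple


def parse_version(text: str) -> Tuple[int, ...]:
    """Turn a dotted version string such as '2.00' into a tuple of ints."""
    return tuple(int(part) for part in text.strip().split("."))


# Minimum Backplane Expander level that worked with 8TB drives in this post.
MIN_EXPANDER_VERSION = parse_version("2.0")


def expander_needs_update(installed: str) -> bool:
    """Return True when the installed expander firmware is older than 2.0."""
    installed_v = parse_version(installed)
    # Pad both tuples to the same length so '2' and '2.0' compare as equal.
    width = max(len(installed_v), len(MIN_EXPANDER_VERSION))
    pad = lambda v: v + (0,) * (width - len(v))
    return pad(installed_v) < pad(MIN_EXPANDER_VERSION)


print(expander_needs_update("1.0"))   # the level my enclosure started at
print(expander_needs_update("2.00"))  # the level after the update
```

Comparing the parts as integers rather than as raw strings avoids surprises with values like "2.00" versus "2.0".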